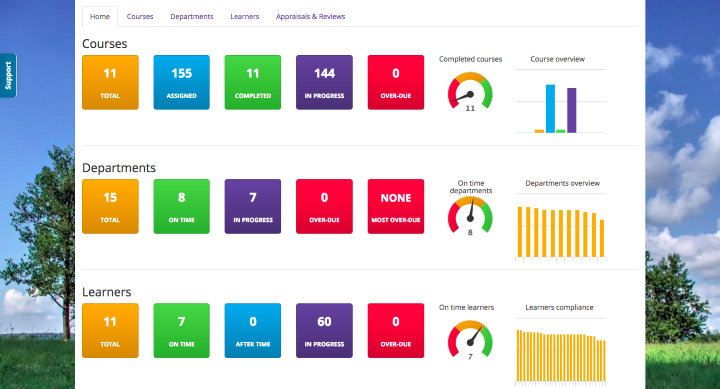

At their recent user group meeting, Alliance member Create eLearning asked us to look to the future and ideate about what's next for the LMS. Create themselves are no slouches when it comes to developing their system – they have recently added the facility to integrate webinars into courses, whilst their dashboard makes it easy to review the course & student data the system produces. And it was from here that our discussions moved to “big data” and how this fits with developing courses and helping our students to achieve better outcomes.

The "big data" of the L&D world is more commonly known as "learning analytics". As with all big data, learning analytics is becoming big business. So whilst we may have an idea about what learning analytics is, do we know who is actually doing it, what the data is being used for and how it may affect what we offer in the future?

To get a proper handle on learning analytics, we can take a quick look at Wikipedia. It gives us "the measurement, collection, analysis and reporting of data about learners and their contexts, for purposes of understanding and optimising learning and the environments in which it occurs". The Open University gives us a bit more: "the use of raw and analysed student data to proactively identify interventions which aim to support students in achieving their study goals" ... "All data captured as a result of the University’s interaction with the student has the potential to provide evidence for learning analytics."

The growing use of online learning helps massively with collecting data about what learners are doing and how they're doing it. In fact, UNESCO have noted that learners are leaving ever-increasing "digital traces", and it's these traces that give us the opportunity to improve our learning interventions and student outcomes.

Learning analytics, then, is about gaining a better understanding of the learning and teaching process by interpreting student and course data so we can "proactively" improve our learners' chances of success. This may involve direct intervention with the student, or perhaps improvements to your courses.

Increasingly, we're seeing analytics being incorporated into LMSs, with dashboards appearing (such as the one in the Create eLearning system pictured below) to help administrators interpret the data collected. As the market for learning analytics grows, so too, we believe, will the analytics functionality included in our LMSs.

There are a number of tools already available for you to use in addition to what's bundled with your LMS. Here are a few free ones you could play about with:

Google Analytics: not specifically about "learning" analytics, but it does report data about any website's traffic and where that traffic comes from, as well as statistics about the site's users, their social network preferences and use of search engines.

SNAPP: a tool that performs real-time social network analysis and visualisation of discussion forum activity within an LMS. It's essentially a diagnostic tool that helps you evaluate behaviour patterns against learning activity, giving you the opportunity to intervene if necessary.

Netlytic: a cloud-based text and social network analyser that helps to summarise large volumes of text. It can also help you discover social networks from online conversations on sites such as Twitter, YouTube, blogs, online forums and chats. It helps identify the level of student engagement and gauge group relationships and interactions.

There are many other tools available, but these three may help you get your head around the topic if you're looking to get your hands dirty.
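For a feel of the kind of question a forum analysis tool is built to answer, here is a minimal Python sketch. It is not the code or API of SNAPP or any other tool mentioned here; the post data and the function names (`reply_counts`, `isolated_learners`) are made up for illustration, and it assumes you can export who replied to whom from your forum.

```python
from collections import Counter

# Hypothetical forum export: (author, person they replied to).
# None means the post started a new thread rather than replying to anyone.
posts = [
    ("alice", None),
    ("bob", "alice"),
    ("carol", "alice"),
    ("alice", "bob"),
    ("dave", None),
    ("bob", "carol"),
]


def reply_counts(posts):
    """Count replies sent and received by each forum participant."""
    sent = Counter()
    received = Counter()
    participants = set()
    for author, replied_to in posts:
        participants.add(author)
        if replied_to is not None:
            participants.add(replied_to)
            sent[author] += 1
            received[replied_to] += 1
    return participants, sent, received


def isolated_learners(posts):
    """Return learners who have posted but neither sent nor received a reply."""
    participants, sent, received = reply_counts(posts)
    return sorted(p for p in participants if sent[p] == 0 and received[p] == 0)


participants, sent, received = reply_counts(posts)
for name in sorted(participants):
    print(f"{name}: sent {sent[name]}, received {received[name]}")
print("No interactions yet:", isolated_learners(posts))
```

In this made-up data, dave has started a thread but nobody has replied to him and he has replied to nobody, so he is the learner a tutor might want to check in with.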

So, if learning analytics can make our learning interventions more successful by helping us make our students more successful, and there are already tools we can use, why are we not all incorporating learning analytics in our organisations and using it to design and refine our courseware and to support our learners?

Let's not beat about the bush: learning analytics can be expensive. Just picture how much data you could be collecting, and the resource you will need to process it all. In addition to the quantity of data, you also need to consider the quality of data. If you're using an old SCORM-based LMS, what does the data look like, and how easy is it to process? And what about the activity not carried out via your LMS-based courses, e.g. social media and classroom-based activity?

Additionally, there are some legal questions to consider: who owns the data (it's personal information about students), and who has access to it? Does all this processing of personal data fall within your existing data protection and user privacy policies? For some ideas around these questions, review the Open University policy on Learning Analytics and the JISC code of practice.

With the ever-increasing use of "big data" in our lives, learning analytics is probably here to stay. If we're lucky, we may already be collecting relevant data (like the Open University), so we can quickly get on with looking at ways to personalise feedback, offer advice for future study, create better services and use data to guide future course design. But many of us are not in that position; some of us feel good that we can run reports about course usage. We'll need to sit down with our LMS supplier to review the existing capabilities of our LMS, get an idea of where their roadmap is going, and determine what other tools may be necessary for us to obtain and process the learning analytics data we need.

If you have examples you are willing to share about how you successfully use learning analytics to improve student outcomes, then do get in touch.